Table of contents

- What is Generative AI?

- History of Generative AI

- How Does Generative AI Work?

- The Evolution of Generative AI Technology

- The Impact of Generative AI on Industries

- Benefits of Generative AI

- Best Practices in Generative AI Adoption

- Generative AI Use Cases and Applications

- The Role of AWS in Advancing Generative AI

- Amazon Generative AI Tools

- Conclusion

In the constantly evolving domain of artificial intelligence, Amazon stands out as a pioneer and leader. With a relentless commitment to innovation and a diverse portfolio of services, Amazon has made significant strides in AI, and its work in generative AI has drawn substantial interest from the technology community. For those wondering what generative AI tools are, AWS offers a suite of generative AI services designed to redefine the boundaries of content creation and to help businesses and developers build practical, impactful solutions.

Generative AI, a field that includes techniques such as Generative Adversarial Networks (GANs), transformers, and diffusion models, has become a cornerstone of modern AI applications. It enables machines to create content ranging from text and images to music that is often difficult to distinguish from human-generated content. Amazon's work in generative AI reflects its ongoing commitment to push the frontiers of AI technology. Through it, Amazon aims to deliver innovative solutions that enhance its products and services, redefine content generation, and create new possibilities for customer engagement. For those seeking to understand generative AI tools, Amazon's offerings provide practical applications and strong capabilities.

AWS generative AI services rely heavily on large language models (LLMs). These models are trained on extensive datasets of text and code, which lets them learn intricate patterns in data and use that knowledge to generate new content. Understanding generative AI tools therefore starts with understanding how LLMs are developed, trained, and deployed by providers like Amazon.

Amazon uses various techniques to train and deploy LLMs effectively. One common approach is distributed training with deep learning frameworks such as TensorFlow or PyTorch, which lets an LLM be trained concurrently on multiple machines and significantly reduces overall training time. Training at this scale is a large part of what makes generative AI tools complex to build.
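The idea of training one model concurrently on several machines can be sketched in plain Python. This is a minimal, hypothetical illustration of synchronous data parallelism, not how TensorFlow or PyTorch are implemented: it assumes a toy one-parameter model y = w * x, gives each simulated worker its own shard of the data, and averages the workers' gradients (the job an all-reduce performs in real frameworks) so every replica applies the same update. The workers run one after another here; a real cluster runs them in parallel on separate GPUs or machines.

```python
from typing import List, Tuple

Sample = Tuple[float, float]  # one (x, y) training example

def shard(data: List[Sample], num_workers: int) -> List[List[Sample]]:
    """Split the dataset round-robin so each worker gets a disjoint shard."""
    return [data[i::num_workers] for i in range(num_workers)]

def local_gradient(w: float, samples: List[Sample]) -> float:
    """Gradient of the mean squared error of y_hat = w * x over one shard."""
    return sum(2 * (w * x - y) * x for x, y in samples) / len(samples)

def all_reduce_mean(grads: List[float]) -> float:
    """Stand-in for an all-reduce: every worker ends up with the average gradient."""
    return sum(grads) / len(grads)

def train_data_parallel(data: List[Sample], num_workers: int = 4,
                        lr: float = 0.01, steps: int = 200) -> float:
    shards = shard(data, num_workers)
    w = 0.0  # all replicas start from identical weights
    for _ in range(steps):
        # In a real cluster, these gradients are computed concurrently on separate devices.
        grads = [local_gradient(w, s) for s in shards]
        w -= lr * all_reduce_mean(grads)  # the same update is applied on every replica
    return w

if __name__ == "__main__":
    # Synthetic data drawn from y = 3x, so training should recover w close to 3.
    data = [(x / 10, 3 * x / 10) for x in range(1, 41)]
    print(round(train_data_parallel(data), 3))
```

Because the shards are equal in size, the averaged gradient equals the full-batch gradient, so the parallel version converges to the same weight as single-machine training while splitting the work across workers.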

Another important technique is dropout, a regularization method that randomly disables a fraction of a network's units during training to keep LLMs from overfitting to the training data. Because overfitting hurts a model's ability to generalize to new, unseen data, dropout helps produce models that are more accurate and more broadly applicable.
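A minimal sketch of dropout in plain Python, assuming the common "inverted dropout" convention (the one PyTorch's `torch.nn.Dropout` uses): during training each activation is zeroed with probability `p` and the survivors are scaled by `1 / (1 - p)`, so no rescaling is needed at inference time. The function and parameter names are illustrative.

```python
import random
from typing import List

def dropout(activations: List[float], p: float, training: bool,
            rng: random.Random) -> List[float]:
    """Inverted dropout: zero each unit with probability p during training and
    scale the survivors by 1 / (1 - p) so the expected activation is unchanged.
    At inference time the input passes through untouched."""
    if not 0.0 <= p < 1.0:
        raise ValueError("p must be in [0, 1)")
    if not training or p == 0.0:
        return list(activations)
    keep = 1.0 - p
    return [a / keep if rng.random() < keep else 0.0 for a in activations]

if __name__ == "__main__":
    rng = random.Random(0)
    x = [1.0] * 10
    print(dropout(x, p=0.5, training=True, rng=rng))   # roughly half zeros, the rest 2.0
    print(dropout(x, p=0.5, training=False, rng=rng))  # unchanged at inference
```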

For developers, Amazon provides a range of tools and services that simplify building and deploying generative AI applications. One such tool is Amazon Bedrock, a fully managed service that offers access to foundation models through a single API and handles the infrastructure needed to deploy and scale them, streamlining the creation of cutting-edge applications.
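As a hedged illustration of what calling a model through Bedrock can look like, the sketch below sends one prompt through the Bedrock Runtime Converse API using the AWS SDK for Python (boto3). It assumes you have AWS credentials configured and access to a model in Bedrock; the function name `ask_model` and its defaults are hypothetical. The client is passed in as a parameter so the function can be exercised with a stub instead of a live AWS account.

```python
from typing import Any, Dict

def ask_model(client: Any, model_id: str, prompt: str,
              max_tokens: int = 512, temperature: float = 0.5) -> str:
    """Send a single-turn prompt through the Bedrock Runtime Converse API
    and return the model's text reply.

    `client` is expected to be boto3.client("bedrock-runtime"); any object with
    a compatible `converse` method also works, which keeps this testable offline."""
    response: Dict[str, Any] = client.converse(
        modelId=model_id,
        messages=[{"role": "user", "content": [{"text": prompt}]}],
        inferenceConfig={"maxTokens": max_tokens, "temperature": temperature},
    )
    # The Converse API returns the assistant reply under output -> message -> content.
    return response["output"]["message"]["content"][0]["text"]
```

In practice you would create the client with `boto3.client("bedrock-runtime", region_name="us-east-1")` and pass the ID of a model enabled in your account; check the Bedrock console for the exact model IDs and inference parameters each model supports.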

In addition, Amazon SageMaker, a versatile machine learning platform, plays a pivotal role. Developers can use SageMaker to both train and deploy LLMs, and it offers an array of pretrained models (through SageMaker JumpStart) so projects can start with minimal delay. For developers exploring generative AI tools, SageMaker serves as a comprehensive platform for accelerating AI innovation.

This blog explores Amazon's work in generative AI services, examining the underlying technologies, practical use cases, benefits, best practices, AWS generative AI tools, and the broader implications of this cutting-edge innovation.

What is Generative AI?

Generative AI, a subset of artificial intelligence, can generate diverse content, including text, images, audio, and synthetic data. Much of the recent excitement comes from user-friendly interfaces that make it easy to create high-quality text, visuals, and videos in seconds. Amazon has emerged as a major player, with AWS generative AI tools and models that simplify and improve content generation. Increasingly, these systems also rely on interaction standards such as the Model Context Protocol (MCP), an open protocol that standardizes how AI applications supply models with context from external tools and data sources across complex workflows.
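To make the protocol less abstract: MCP messages are JSON-RPC 2.0 requests and responses, and a client asks an MCP server to run one of its tools with a `tools/call` request. The sketch below only builds that message. The tool name `get_order_status` and its arguments are hypothetical, and the transport (stdio or HTTP), the initialization handshake, and tool discovery via `tools/list` are left out.

```python
import itertools
import json
from typing import Any, Dict

_request_ids = itertools.count(1)  # JSON-RPC requests need ids that are unique within a session

def make_tool_call(tool_name: str, arguments: Dict[str, Any]) -> str:
    """Serialize an MCP `tools/call` request as a JSON-RPC 2.0 message."""
    message = {
        "jsonrpc": "2.0",
        "id": next(_request_ids),
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }
    return json.dumps(message)

if __name__ == "__main__":
    # Hypothetical tool that an MCP server might expose.
    print(make_tool_call("get_order_status", {"order_id": "A-1001"}))
```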

Generative AI is not a recent innovation. Its origins date back to the 1960s, when it first appeared in chatbots. However, it wasn't until 2014, with the introduction of generative adversarial networks (GANs), a class of machine learning algorithms, that generative AI could produce convincingly realistic images, videos, and audio of real people. This capability has opened up possibilities such as better movie dubbing and more engaging educational materials. It has also raised concerns about deepfakes (digitally manipulated images or videos) and about cybersecurity threats to businesses, such as fraudulent requests that convincingly mimic a supervisor or manager. Amazon continues to address such challenges through security measures built into its AWS generative AI services.

Two recent breakthroughs, discussed further in the following sections, have driven the widespread adoption of generative AI: transformers and the revolutionary language models they made possible. Transformers are a neural network architecture that let researchers train ever-larger models without labeling all of the data in advance. This made it possible to train models on massive volumes of text, producing more comprehensive and insightful responses, and AWS generative AI models build on this architecture. The Model Context Protocol complements these models by standardizing how context from prompts, tools, and data sources is passed to them.

Moreover, transformers are built around a mechanism called "attention," which lets a model capture relationships between words within individual sentences and across entire pages, chapters, and books. The same ability to trace intricate connections extends beyond text, allowing transformers, including those behind AWS generative AI services, to analyze code, proteins, chemicals, and DNA.
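The attention mechanism described above is commonly written as scaled dot-product attention, softmax(QK^T / sqrt(d_k))V, where each token's query vector is compared with every token's key vector and the resulting weights mix the value vectors. The plain-Python sketch below implements that formula for a single head; it is illustrative only and leaves out the learned projection matrices, multiple heads, and masking that production transformers add.

```python
import math
from typing import List

Matrix = List[List[float]]

def softmax(row: List[float]) -> List[float]:
    m = max(row)  # subtract the max for numerical stability
    exps = [math.exp(v - m) for v in row]
    total = sum(exps)
    return [e / total for e in exps]

def attention(Q: Matrix, K: Matrix, V: Matrix) -> Matrix:
    """Scaled dot-product attention: softmax(Q K^T / sqrt(d_k)) V.
    Each output row is a weighted mix of the value rows, weighted by how
    strongly that query matches every key."""
    scale = math.sqrt(len(K[0]))
    out = []
    for q in Q:
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / scale for k in K]
        weights = softmax(scores)
        out.append([sum(w * v[j] for w, v in zip(weights, V)) for j in range(len(V[0]))])
    return out

if __name__ == "__main__":
    # Three toy token vectors used as queries, keys, and values (self-attention).
    X = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
    for row in attention(X, X, X):
        print([round(v, 3) for v in row])
```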

Rapid progress in large language models (LLMs), with billions or even trillions of parameters, has ushered in a new era in which generative models can spontaneously compose captivating text, craft lifelike images, and even produce somewhat enjoyable sitcoms. Amazon's generative AI models aim to generate contextually relevant, engaging content for a wide range of use cases.

Furthermore, advances in multimodal AI have enabled teams to generate content across media types, including text, visuals, and videos. This progress underpins tools like DALL-E, which generates images from text descriptions, and related models that produce text captions for images. Amazon and AWS are active in these developments, driving innovation in content creation and multimedia capabilities.

Despite these remarkable advances, we are still in the early stages of using generative AI to create coherent text and photorealistic or stylized graphics. Early implementations have suffered from accuracy and bias issues, as well as occasional nonsensical or bizarre responses. Nevertheless, ongoing progress suggests that generative AI could bring profound changes to enterprise technology and to how companies operate. It has the potential to aid in coding, drug discovery, product development, process reengineering, and the overhaul of supply chains.

History of Generative AI

Among the earliest examples of generative AI is the ELIZA chatbot, created by Joseph Weizenbaum in the 1960s. These early systems used a rule-based approach that was fragile because of a restricted vocabulary, poor grasp of context, and heavy dependence on patterns, among other limitations. They were also difficult to customize and extend. This era laid the groundwork for today's generative AI tools, including those on AWS.

Around 2010, generative AI experienced a resurgence driven by significant advances in neural networks and deep learning. These breakthroughs let systems learn tasks such as text analysis, image element classification, and audio transcription on their own, paving the way for more sophisticated tools like those AWS offers today.

In 2014, Ian Goodfellow introduced Generative Adversarial Networks (GANs), a deep learning technique that revolutionized the field. A GAN pits two neural networks against each other: a generator that creates candidate content and a discriminator that tries to tell it apart from real data. This approach could generate lifelike people, voices, music, and text, sparking both curiosity and concern about its use in crafting realistic deepfakes that mimic people's voices and appearances in videos.
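The competition between the two networks is usually expressed as the minimax objective from the original GAN formulation, where the discriminator D outputs the probability that its input is real and the generator G maps random noise z to a synthetic sample:

$$
\min_G \max_D \; V(D, G) = \mathbb{E}_{x \sim p_{\text{data}}}\left[\log D(x)\right] + \mathbb{E}_{z \sim p_z}\left[\log\left(1 - D(G(z))\right)\right]
$$

The discriminator is trained to increase V (scoring real data as real and generated data as fake), while the generator is trained to decrease it by producing samples the discriminator accepts as real.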

Since then, advances in other neural network techniques and architectures have further expanded what generative AI can do. These include variational autoencoders (VAEs), long short-term memory (LSTM) networks, transformers, diffusion models, and neural radiance fields, all of which improve the ability to create lifelike and complex outputs.

The continued evolution of AWS generative AI tools underscores their transformative power, from generating highly realistic synthetic data to crafting immersive multimedia experiences. As a leader in this domain, AWS continues to push the boundaries of generative AI applications across industries.

How Does Generative AI Work?

Like most modern artificial intelligence, generative AI relies on machine learning models, specifically very large models that are pretrained on extensive datasets.

A) Large Language Models

Large language models (LLMs) are one class of foundation models (FMs). OpenAI's generative pretrained transformer (GPT) models are a well-known example. LLMs are designed primarily for language tasks such as summarization, text generation, classification, open-ended conversation, and information extraction.

What distinguishes LLMs is their versatility across many tasks. This is attributed largely to their enormous number of parameters, which lets them capture complex concepts.

GPT-3, for example, has 175 billion parameters, which allows it to produce content from minimal input. Because LLMs are exposed to vast and diverse internet data during pretraining, they can apply what they have learned across a broad range of contexts.

B) Foundation Models

Foundation models (FMs) are machine learning models trained on extensive, diverse, and unlabeled datasets. They perform well across a wide variety of general tasks.

Foundation models represent the culmination of several decades of technological progress. They use learned patterns and associations to predict the next item in a sequence.

In image generation, for example, a model may refine a noisy or rough image into a sharper, more detailed version of it. In text generation, the model predicts the next word in a string from the preceding words and their context, then selects that word by sampling from a probability distribution.
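The text-generation step can be sketched in a few lines of Python. In this hypothetical example the model has already produced raw scores (logits) for a handful of candidate next words; a softmax turns them into a probability distribution, and the next word is drawn from it. The `temperature` parameter, a common but not universal sampling control, is an assumption here: values below 1 make the likeliest word more dominant, values above 1 flatten the distribution. Real models score tens of thousands of tokens, not four words.

```python
import math
import random
from typing import Dict

def next_token_distribution(logits: Dict[str, float],
                            temperature: float = 1.0) -> Dict[str, float]:
    """Turn raw model scores (logits) into probabilities with a softmax.
    Lower temperature sharpens the distribution; higher temperature flattens it."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = {tok: v / temperature for tok, v in logits.items()}
    m = max(scaled.values())  # subtract the max for numerical stability
    exps = {tok: math.exp(v - m) for tok, v in scaled.items()}
    total = sum(exps.values())
    return {tok: e / total for tok, e in exps.items()}

def sample_next_token(logits: Dict[str, float], temperature: float,
                      rng: random.Random) -> str:
    """Draw one token at random, weighted by its probability."""
    probs = next_token_distribution(logits, temperature)
    tokens = list(probs)
    return rng.choices(tokens, weights=[probs[t] for t in tokens], k=1)[0]

if __name__ == "__main__":
    # Hypothetical scores a model might assign after "The cat sat on the".
    logits = {"mat": 3.0, "sofa": 2.0, "roof": 1.0, "banana": -2.0}
    print(next_token_distribution(logits, temperature=1.0))
    print(sample_next_token(logits, temperature=0.7, rng=random.Random(42)))
```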

The Evolution of Generative AI Technology

Generative models rooted in statistics have been used for many years to help analyze numerical data. More recently, neural networks and deep learning laid the foundation for contemporary generative AI. A significant milestone came in 2013 with Variational Autoencoders (VAEs), among the first deep generative models widely used to produce lifelike images and speech. This evolution contributed to the AWS generative AI tools available today for data-driven applications.

VAEs introduced the ability to generate new variations of many types of data. This triggered the rapid development of further generative models, including Generative Adversarial Networks (GANs) and diffusion models, which focused on producing synthetic data that closely resembles real data.
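A minimal sketch of how a VAE produces those variations, assuming an encoder (not shown) has already mapped an input to the mean `mu` and log-variance `log_var` of a Gaussian in latent space. Sampling with the reparameterization trick gives a slightly different latent vector each time, which a decoder (also not shown) would turn into a new variation of the input; the KL term is the part of the VAE training loss that keeps these latent distributions close to a standard normal. The numbers in the example are hypothetical.

```python
import math
import random
from typing import List

def sample_latent(mu: List[float], log_var: List[float],
                  rng: random.Random) -> List[float]:
    """Reparameterization trick: z = mu + sigma * eps, with eps ~ N(0, 1).
    Different eps draws around the same mu give different variations."""
    return [m + math.exp(0.5 * lv) * rng.gauss(0.0, 1.0) for m, lv in zip(mu, log_var)]

def kl_to_standard_normal(mu: List[float], log_var: List[float]) -> float:
    """KL(N(mu, sigma^2) || N(0, 1)) summed over latent dimensions:
    -0.5 * sum(1 + log_var - mu^2 - exp(log_var))."""
    return -0.5 * sum(1 + lv - m * m - math.exp(lv) for m, lv in zip(mu, log_var))

if __name__ == "__main__":
    rng = random.Random(7)
    mu, log_var = [0.5, -1.0], [-1.0, -1.0]  # hypothetical encoder outputs for one input
    for _ in range(3):
        print([round(v, 3) for v in sample_latent(mu, log_var, rng)])
    print(round(kl_to_standard_normal(mu, log_var), 4))
```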

The landscape of AI research changed notably in 2017 with the introduction of transformers. Transformers kept the encoder-decoder architecture but built it entirely around attention mechanisms instead of recurrence, making the training of language models far more efficient and adaptable. Models such as GPT emerged as foundation models that are pretrained on large collections of raw text and then fine-tuned for various tasks. This leap has shaped the AWS generative AI tools used for diverse and sophisticated generative tasks.

Transformers reshaped natural language processing and expanded generative capabilities, handling tasks such as translation, summarization, and question answering. AWS generative AI tools apply these capabilities across a broad range of industries.

Generative models, including those available on AWS, continue to advance and find applications across various sectors. Current work focuses on adapting these models to work with proprietary data so that they remain adaptable and useful in business settings. Researchers are also improving the quality of generated text, images, videos, and speech to make them increasingly human-like.

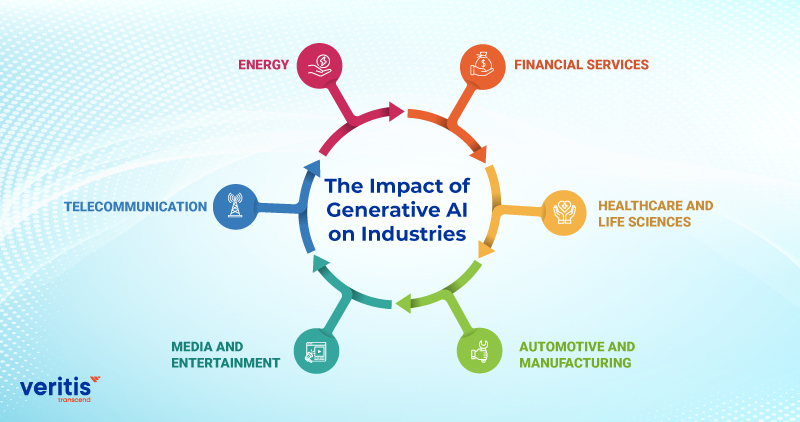

The Impact of Generative AI on Industries

Although generative AI has the potential to influence all industries in the long run, specific sectors are primed to reap the rewards of this technology swiftly.

1) Financial Services

Financial services firms can leverage generative AI to enhance customer service and reduce operational expenses in several ways:

- Enhanced Customer Service: Financial institutions can deploy chatbots to offer product recommendations and address customer queries promptly, improving customer satisfaction overall.

- Accelerated Loan Approvals: Lending organizations can speed up loan approvals, which is especially valuable in underserved financial markets such as those in developing countries.

- Fraud Detection: Banks can swiftly identify fraudulent activities related to claims, credit cards, and loans, bolstering security and trust.

- Personalized Financial Advice: Investment firms can use generative AI to deliver personalized, cost-effective financial guidance to their clients, supporting their financial well-being.

2) Healthcare and Life Sciences

One of the most compelling applications of generative AI is accelerating drug discovery and research. Generative models can design novel protein sequences with specific properties for developing antibodies, enzymes, vaccines, and gene therapies.

In healthcare and life sciences, generative AI is also valuable for designing synthetic gene sequences for synthetic biology and metabolic engineering. For instance, these models can help design novel biosynthetic pathways or optimize gene expression for biomanufacturing.

Furthermore, generative AI plays an important role in generating synthetic patient and healthcare data. This data helps train AI models, simulate clinical trials, and study rare diseases, especially when access to large real-world datasets is limited.

3) Automotive and Manufacturing

Automotive companies can apply generative AI to a wide range of needs, from engineering enhancements to in-vehicle experiences and customer support. For example, they can streamline the design of mechanical components to reduce aerodynamic drag, or refine the design of in-vehicle personal assistants.

Furthermore, automotive companies are capitalizing on generative AI to elevate customer service, delivering swift responses to frequently asked questions. Additionally, this technology empowers the creation of novel materials, chipsets, and component designs, optimizing manufacturing procedures and cutting production costs.

Generative AI is also useful for creating synthetic data for testing applications. This is particularly valuable for scenarios that are rarely represented in testing datasets, such as defect detection or edge cases.

4) Media and Entertainment

Generative AI models can generate new content across various domains, including animations, scripts, and even full-length movies, at considerably lower cost and in less time than conventional production methods.

Here are additional applications of generative AI within the industry:

- Musicians and artists can augment their albums with AI-generated music to craft novel and immersive experiences.

- Media entities can harness generative AI to enhance audience engagement by providing personalized content and advertisements, ultimately driving revenue growth.

- Gaming enterprises can leverage generative AI to innovate game development and enable players to create and customize their avatars, enriching the gaming experience.

5) Telecommunication

In the initial stages of applying generative AI within the telecommunications industry, the primary focus revolves around transforming the customer experience. This experience encompasses all subscriber interactions throughout the entire customer journey.

For instance, telecommunication companies can use generative AI to improve customer service with conversational agents that emulate human-like interactions. Generative AI can also improve network performance by analyzing network data and recommending improvements. Furthermore, it lets companies reimagine customer relationships by offering personalized one-on-one sales assistants.

6) Energy

Generative AI is highly valuable in energy sector applications that require intricate analysis of raw data, pattern recognition, predictive modeling, and optimization. Energy companies can improve customer service by mining enterprise data to identify consumption patterns. With this information, they can tailor product offerings, design energy-efficient programs, and launch demand response strategies.

Generative AI also serves as a valuable tool in grid management, improving operational site safety and optimizing energy production, for example through advanced reservoir simulations.

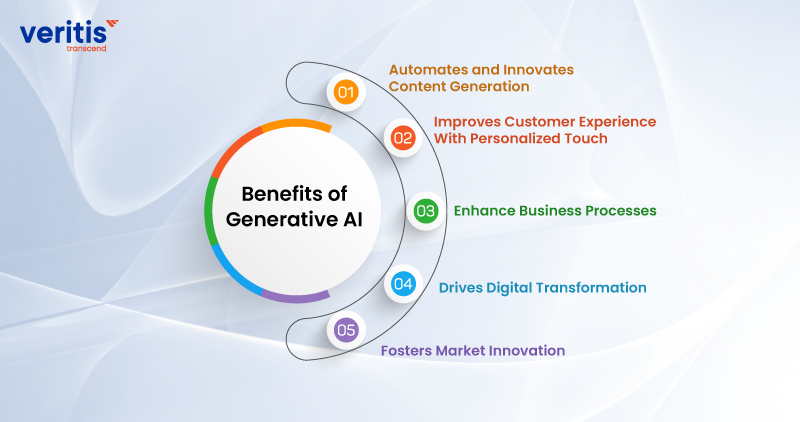

Benefits of Generative AI

Generative AI systems offer numerous advantages. Here, we’ll explore some of the most notable improvements that generative AI applications facilitate.

1) Automates and Innovates Content Generation

Generative AI finds significant utility in content creation, a primary area of interest for many businesses. Marketing teams invest substantial efforts in generating fresh content, including marketing copy, blog articles, social media updates, and graphic design.

AI can assist in these tasks effectively. Generative AI tools can follow specific directives. For example, if you require a landing page, you can instruct your AI text generator to compose an introductory paragraph that addresses your customers’ challenges and connects them with potential solutions offered by your product.
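Such directives work best when they are explicit and repeatable. The sketch below, which uses Python's standard `string.Template`, shows one way to turn the landing-page request into a reusable prompt; the template wording and field names are hypothetical, and the resulting string would be sent to whichever text-generation model you use.

```python
from string import Template

# Hypothetical prompt template; the field names are illustrative, not a product API.
LANDING_INTRO = Template(
    "You are a marketing copywriter.\n"
    "Write a $length introductory paragraph for a landing page about $product.\n"
    "Audience: $audience.\n"
    "Open with this customer challenge: $pain_point.\n"
    "Then explain how $product addresses it. Tone: $tone. Do not invent statistics."
)

def build_prompt(**fields: str) -> str:
    """Fill every placeholder. Template.substitute raises KeyError if one is
    missing, which catches incomplete briefs before they reach the model."""
    return LANDING_INTRO.substitute(**fields)

if __name__ == "__main__":
    print(build_prompt(
        length="three-sentence",
        product="a cloud cost dashboard",
        audience="finance teams at mid-sized companies",
        pain_point="monthly cloud bills that are hard to attribute to teams",
        tone="confident and plain-spoken",
    ))
```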

These capabilities help companies automate content generation and foster innovation. Experiment with these AWS AI tools by feeding them novel concepts as input, and observe how they expand on your proposals. This can become a collaborative exchange in which you refine the AI-generated concepts and gradually turn them into actionable, valuable ideas.

2) Improves Customer Experience With Personalized Touch

Another way AI improves business operations is by tailoring customer engagement. Generative AI helps organizations align their offerings and services with individual customer expectations. Combined with the customer data at your disposal, it lets businesses create highly personalized experiences.

For example, an e-commerce business that maintains data on customer demographics and likely product preferences can use generative AI to craft personalized content for each customer, resulting in more relevant and timely product recommendations.

Ultimately, this approach culminates in an enhanced customer experience, ensuring that customers receive products and interactions that are best tailored to their preferences and needs.

3) Enhances Business Processes

AI contributes to optimizing business operations by streamlining processes. Identifying tasks that can be automated and employing AI for data generation can alleviate the workload of your workforce, resulting in increased daily productivity.

An illustrative instance of this is report analysis. Business managers are tasked with examining comprehensive reports containing data about their company and the market. They invest substantial time in the analysis process to fully comprehend the information within these reports.

A notable feature of contemporary large language models (LLMs) is their ability to analyze data and derive insights. Many applications let users paste text reports into AI text generators to get concise summaries, and some software supports conversational interaction with PDF documents, further streamlining summarization.
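Long reports often exceed the amount of text a model can read at once, so summarization tools commonly split the document into overlapping chunks, summarize each one, and then summarize the partial summaries (a "map-reduce" pattern). The sketch below is a hypothetical illustration of that pattern: it counts words rather than model tokens, and `summarize` stands in for a call to whatever model you use.

```python
from typing import Callable, List

def chunk_words(text: str, max_words: int = 300, overlap: int = 30) -> List[str]:
    """Split text into windows of at most max_words words; consecutive windows
    share `overlap` words so a sentence cut at a boundary appears in both."""
    if not 0 <= overlap < max_words:
        raise ValueError("overlap must be in [0, max_words)")
    words = text.split()
    step = max_words - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + max_words]))
        if start + max_words >= len(words):
            break  # the last window already reaches the end of the text
    return chunks

def summarize_report(text: str, summarize: Callable[[str], str],
                     max_words: int = 300) -> str:
    """Map-reduce summarization: summarize each chunk, then the summaries."""
    partials = [summarize(c) for c in chunk_words(text, max_words, overlap=max_words // 10)]
    if not partials:
        return ""
    return partials[0] if len(partials) == 1 else summarize("\n".join(partials))
```

A production version would count tokens with the model's own tokenizer and repeat the reduce step if the combined summaries are still too long.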

4) Drives Digital Transformation

Generative AI can steer businesses toward digital transformation because of the wealth of data insights it provides, which improves leaders' decision-making. Consider a construction firm that is not inclined to invest in technology. Because so much of its work happens in the field, it may have limited engagement with technological tools.

However, the situation changes when the firm applies machine learning algorithms to equipment analysis. Using AI for predictive maintenance lets it address equipment issues proactively, which gives it a compelling reason to invest in digital transformation: keeping equipment in optimal condition before problems arise.

5) Fosters Market Innovation

Enterprises looking to establish their presence in their industries can use generative AI to extract insights from datasets too large for people to analyze comprehensively in the time available. This creates opportunities for market innovation, including developing new products and services, responding to potential market shifts, and gaining further valuable insights.

Furthermore, generative AI can reduce the risk associated with innovation. By providing a deeper understanding of consumer preferences, it reduces uncertainty in new product development and gives businesses a competitive edge by aligning their ideas more closely with their target audience.

Best Practices in Generative AI Adoption

When your company sets out to integrate generative AI solutions, consider the following best practices to get the most from your efforts.

1) Start with Internal Applications

Your generative AI adoption should ideally begin with internal applications. In this phase, prioritize process optimization and employee productivity. Internal applications provide a controlled environment for rigorous outcome testing, skill development, and a deeper understanding of the technology. They also allow comprehensive model testing and customization using internal knowledge sources, providing a more robust foundation for effective model deployment.

This approach helps ensure that your customers get a significantly better experience when you later deploy these models in external applications.

2) Improve Transparency

Establishing clear communication about all generative AI applications and their outcomes is essential, so users know when they are interacting with AI rather than humans. One practical approach is to have the AI explicitly introduce itself as an AI, and to label and highlight AI-generated search results distinctly.

This transparency empowers users to exercise their judgment when interacting with the content, making them more proactive in addressing potential inaccuracies or hidden biases that may arise from limitations in the underlying model’s training data.

3) Implement Security

Establish safeguards within your generative AI applications to prevent unauthorized access to sensitive data. Engaging security teams from the outset is crucial to cover every aspect of data protection. This may include data masking and removing personally identifiable information (PII) before any model training on internal data.
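As a minimal sketch of the PII-removal step, the Python below replaces email addresses, US-style Social Security numbers, and phone numbers with typed placeholders using regular expressions. The patterns are deliberately simple assumptions for illustration; real PII detection must also cover names, addresses, account numbers, and other formats, and is often handled by a dedicated service such as Amazon Comprehend's PII detection.

```python
import re

# Deliberately simple, illustrative patterns. Order matters: SSNs are masked
# before the broader phone pattern runs.
PII_PATTERNS = {
    "EMAIL": re.compile(r"\b[\w.+-]+@[\w-]+(?:\.[\w-]+)+\b"),
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "PHONE": re.compile(r"(?:\+?\d{1,3}[\s.-]?)?\(?\d{3}\)?[\s.-]?\d{3}[\s.-]?\d{4}\b"),
}

def mask_pii(text: str) -> str:
    """Replace each match with a typed placeholder such as [EMAIL]."""
    for label, pattern in PII_PATTERNS.items():
        text = pattern.sub(f"[{label}]", text)
    return text

if __name__ == "__main__":
    print(mask_pii("Contact jane.doe@example.com or 555-123-4567; SSN 123-45-6789."))
```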

4) Thorough Testing is Essential

Establish automated and manual testing protocols to validate outcomes across a wide range of scenarios the generative AI system may encounter. Engage distinct sets of beta testers to assess applications from various perspectives and meticulously record the results. This continuous testing approach contributes to ongoing model improvement and provides greater control over expected outcomes and responses.
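Part of that automated testing can be a simple regression suite that runs fixed prompts through the application and checks each response for required and forbidden phrases. The harness below is a hypothetical sketch: `model` is any function wrapping your generative AI endpoint, and the stub model and test cases are placeholders. Phrase checks catch regressions cheaply but do not replace human review of response quality.

```python
from dataclasses import dataclass, field
from typing import Callable, List

@dataclass
class Case:
    prompt: str
    must_contain: List[str] = field(default_factory=list)
    must_not_contain: List[str] = field(default_factory=list)

def run_suite(model: Callable[[str], str], cases: List[Case]) -> List[str]:
    """Run every prompt through `model` and return human-readable failures
    (case-insensitive phrase checks)."""
    failures = []
    for case in cases:
        output = model(case.prompt).lower()
        for phrase in case.must_contain:
            if phrase.lower() not in output:
                failures.append(f"{case.prompt!r}: missing {phrase!r}")
        for phrase in case.must_not_contain:
            if phrase.lower() in output:
                failures.append(f"{case.prompt!r}: unexpected {phrase!r}")
    return failures

if __name__ == "__main__":
    # Stub model so the harness runs offline; swap in a real client in practice.
    def stub_model(prompt: str) -> str:
        return "I am an AI assistant. Our refund window is 30 days."

    cases = [
        Case("What is the refund window?", must_contain=["30 days"]),
        Case("Are you a human?", must_contain=["AI"], must_not_contain=["I am a human"]),
    ]
    print(run_suite(stub_model, cases) or "all checks passed")
```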

Generative AI Use Cases and Applications

Generative AI is causing considerable disruptions across various industries, impacting businesses in numerous ways. Let’s delve into some of the most notable use cases.

1) Generating Content: Images, Music, and Videos

Generative AI models, such as Generative Adversarial Networks (GANs) and diffusion models, can produce new content including images, videos, and music. The success of widely recognized tools like DALL-E, Stable Diffusion, and Midjourney shows that visual content generation is one of the most prominent applications of generative AI.

Increasing attention is directed toward improving the quality and variety of generated content. Researchers are exploring ways to use AI to turn static 2D images into 3D scenes. For example, NVIDIA Picasso is a cloud service for building generative AI models that produce high-quality, photorealistic images, videos, and 3D assets.

AI-generated art has even found a place at the prestigious Museum of Modern Art in New York City. Renowned artist Refik Anadol used an advanced machine learning model to interpret publicly available visual and informational data from MoMA's collection.

2) Automated Custom Software Engineering

Generative AI also has applications in software engineering, where it helps automate the development of tailored software solutions. For instance, GPT-3 can produce code from natural language descriptions, streamlining the software development process.

Current research efforts are dedicated to enhancing the precision and effectiveness of code generation. As an example, researchers are actively working on AI models designed to comprehensively grasp the context of the code, leading to the production of code that is not only more accurate but also more efficient.

3) Writing Assistance

Generative AI models like GPT-3 and GPT-4 play a pivotal role in many writing tasks, generating human-like text for emails, articles, and other written content. Ongoing research increasingly focuses on improving the coherence and relevance of the generated text.

4) Text-to-Speech Solutions

Generative AI models are instrumental in converting text into lifelike human speech. These models are used in voice assistants, text-to-speech features, and other speech-related tasks.

Current research focuses on making generated speech more natural and expressive. Leading companies, including Google, use AI in their text-to-speech solutions. Google Cloud Text-to-Speech, for instance, uses AI to convert text into natural-sounding speech, offering a wide array of voices in over 40 languages.

5) Product Design and Development

Generative AI models are pivotal in product design and development by generating innovative design concepts. They can learn from existing designs and accelerate the design phase significantly.

Ongoing research in this domain is primarily geared toward enhancing the quality and diversity of the generated designs. Research is in progress to develop models capable of generating designs that not only satisfy aesthetic criteria but also fulfill functional requirements. In practical applications, companies like Autodesk have embraced AI to support product design. Autodesk’s tool, Dreamcatcher, employs AI to create design alternatives tailored to the designer’s specific criteria.

6) Gaming Solutions

Generative AI models are used in the gaming industry to create fresh game components, including levels, characters, and other elements. These models can learn from pre-existing game components and produce novel ones, adding diversity and innovation to games.

Ongoing research endeavors aim to develop models that generate game levels characterized by visual allure and a harmonious blend of challenge and entertainment. Prominent companies such as Ubisoft harness the power of AI in game development. Ubisoft’s tool, Commit Assistant, leverages AI to anticipate and rectify bugs within the game code, enhancing the development process.

7) Healthcare Solutions

In healthcare, generative AI models help design novel drug molecules and predict disease progression, among other applications. By learning from existing medical data, they surface new insights that can speed up research and treatment.

Research focuses on improving the accuracy and relevance of generated medical insights, for example by building models that predict disease progression more accurately and generate more effective drug molecules. Organizations such as DeepMind are applying AI to healthcare research: DeepMind's AlphaFold predicts the 3D structures of proteins, which is critical for understanding disease and advancing drug development.

8) Enhance Customer Experience

A) Chatbots and Virtual Assistants

Streamline customer self-service and cut operational costs by deploying generative AI-driven chatbots, voice bots, and virtual assistants that automate responses to customer service inquiries.

B) Agent Assistance and Conversation Evaluation

Boost agent effectiveness and first-contact resolution by helping agents with knowledge retrieval, call summarization, and issue resolution. Managers can also extract insights to improve the customer experience, monitor agent performance, and drive business improvements.

C) Personalization

Enhance customer engagement by delivering tailored offerings and communications.

9) Enhancing Employee Efficiency

A) Conversational Search

Employees are more productive when they can find and use accurate information quickly. A conversational interface makes this easier by letting employees query data in natural language and quickly summarize content.

B) Code Generation

Speed up the process of application development by providing code recommendations that stem from the developer’s comments and existing code.

C) Automated Report Generation

Generative AI can automate the creation of financial reports, summaries, and projections, saving time and reducing the potential for errors.

10) Improve Content Creation and Creativity

A) Marketing

Generative AI streamlines marketing content creation by automating tasks such as drafting blog posts and social media updates. This saves time and resources, helps maintain a consistent online presence, and improves productivity and cost efficiency for businesses engaging with their audiences.

B) Product Development

AI can quickly produce many design prototypes from given inputs and constraints, speeding up ideation. It can also refine existing designs based on user feedback and defined constraints.

C) Sales

Create personalized emails and messages tailored to a prospect’s profile and actions, enhancing response rates. Likewise, generate sales scripts and talking points customized to the customer’s segment, industry, and the specific product or service being offered.

11) Speed Up Process Optimization

A) Document Processing

Improve business operations by automatically extracting and summarizing data from documents, using generative AI-powered question answering.

B) Supply Chain Optimization

Reduce costs and improve efficiency by evaluating and optimizing supply chain scenarios, taking into account factors such as transportation routes and inventory management.

C) Data Augmentation

Create synthetic data for training ML models, especially when the original dataset is limited, imbalanced, or contains sensitive information.
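One classic way to rebalance an imbalanced dataset is to interpolate new minority-class points between existing ones. The sketch below is a simplified, SMOTE-style illustration, not a generative model and not an AWS feature: it picks random pairs of minority samples rather than nearest neighbours (real SMOTE interpolates toward k nearest neighbours), and the function name `interpolate_minority` is hypothetical.

```python
import random
from typing import List, Sequence


def interpolate_minority(
    samples: Sequence[Sequence[float]], n_new: int, seed: int = 0
) -> List[List[float]]:
    """Create n_new synthetic points on line segments between random pairs of minority samples."""
    if len(samples) < 2:
        raise ValueError("need at least two samples to interpolate")
    rng = random.Random(seed)  # seeded so the output is reproducible
    synthetic = []
    for _ in range(n_new):
        a, b = rng.sample(list(samples), 2)  # two distinct existing samples
        t = rng.random()  # position along the segment from a to b, in [0, 1)
        synthetic.append([x + t * (y - x) for x, y in zip(a, b)])
    return synthetic


minority = [[1.0, 2.0], [1.5, 1.8], [0.9, 2.4]]
new_points = interpolate_minority(minority, n_new=5)
print(len(new_points))
```

Because every new point is a convex combination of two real samples, it stays inside the region the minority class already occupies; that keeps the synthetic data plausible but also means the technique cannot invent genuinely new patterns.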


The Role of AWS in Advancing Generative AI

Amazon Web Services (AWS) makes it easier to build and scale generative AI applications tailored to your data, use cases, and customer needs. With AWS generative AI, you get strong security and privacy controls, access to leading foundation models (FMs), generative AI-powered applications, and a data-first approach.

Choose from a range of AWS generative AI tools and technologies that serve organizations at every stage of generative AI adoption and growth.

- Code generation is one of the most promising applications of generative AI. Amazon CodeWhisperer, an AI coding companion, substantially improves developer productivity: during its preview, AWS ran a productivity challenge in which participants using CodeWhisperer were 27 percent more likely to complete their tasks and finished them, on average, 57 percent faster than those who did not use it.

- Amazon Bedrock is a fully managed service that offers a choice of high-performing foundation models (FMs) along with a broad set of capabilities. It lets you experiment with leading FMs, customize them privately with your own data, and build managed agents that carry out complex business tasks.

- Amazon SageMaker JumpStart helps you discover, explore, and deploy open source foundation models (FMs), or build your own. It provides managed infrastructure and tools that speed up the development, training, and secure deployment of scalable, reliable models.

- AWS HealthScribe is a HIPAA-eligible service that lets healthcare software providers build clinical applications that automatically generate clinical notes from patient-clinician conversations. It combines speech recognition with generative AI to ease the burden of clinical documentation, transcribing patient-clinician conversations and producing clinical notes that are easier to review.

- Amazon QuickSight's Generative BI authoring features let business analysts create and customize visuals using natural language. These capabilities extend QuickSight Q's natural language querying beyond answering structured questions, helping analysts quickly build customizable visuals, refine visualizations, clarify query intent through follow-up questions, and perform complex calculations.

AWS generative AI tools continue to advance, giving organizations tailored ways to streamline and strengthen data-driven work across industries.

Amazon Generative AI Tools

Amazon's generative AI tools serve a wide range of applications, helping businesses use generative AI on AWS to improve efficiency, personalization, and data-driven insight.

1) Amazon Bedrock

Amazon Bedrock is a fully managed service that offers high-performing foundation models (FMs) from leading AI companies, including Meta, AI21 Labs, Anthropic, Stability AI, Cohere, and Amazon, through a single API, together with a broad set of capabilities for building generative AI applications. This simplifies development while maintaining privacy and security.

With Amazon Bedrock, you can easily experiment with leading FMs and privately customize them with your own data using techniques such as fine-tuning and retrieval augmented generation (RAG). You can also build managed agents that carry out complex business tasks, from booking travel and processing insurance claims to creating ad campaigns and managing inventory, without writing code. Because Amazon Bedrock is serverless, there is no infrastructure to manage, and you can securely integrate generative AI into your applications using the AWS services you already know.

Benefits of Amazon Bedrock

A) Selection of Top Tier Foundation Models

Amazon Bedrock provides an intuitive developer experience for working with a diverse selection of high-performing foundation models (FMs) from leading AI companies, including Cohere, Meta, AI21 Labs, Anthropic, Stability AI, and Amazon. You can experiment with different FMs in the provided playground and use a single API for inference across models from different providers. Because that API is shared, moving to the latest model versions requires only minimal code changes.
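A minimal sketch of what the single inference API looks like in practice, assuming the boto3 `bedrock-runtime` client and its Converse operation (a later addition to Bedrock that accepts the same message format across supported models). The model IDs in the usage comment are examples only; availability depends on your region and on which models your account has been granted access to. The helper name `ask_model` is hypothetical.

```python
from typing import Any


def ask_model(client: Any, model_id: str, prompt: str, max_tokens: int = 256) -> str:
    """Send one user message through the Bedrock Converse API and return the reply text."""
    response = client.converse(
        modelId=model_id,  # the only argument that changes between providers
        messages=[{"role": "user", "content": [{"text": prompt}]}],
        inferenceConfig={"maxTokens": max_tokens, "temperature": 0.5},
    )
    # The assistant reply is a list of content blocks; take the first text block.
    return response["output"]["message"]["content"][0]["text"]


# Usage (needs AWS credentials and model access, so it is left commented out):
# import boto3
# client = boto3.client("bedrock-runtime", region_name="us-east-1")
# for model_id in ["anthropic.claude-3-haiku-20240307-v1:0", "amazon.titan-text-express-v1"]:
#     print(model_id, ask_model(client, model_id, "Explain RAG in one sentence."))
```

Only `modelId` changes in the loop; the request and response shapes stay the same, which is what lets applications switch models with little code churn.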

B) Simplified Data-Based Model Customization

Privately tailor foundation models (FMs) to your own data through a visual interface, without writing code. Select training and validation data stored in Amazon Simple Storage Service (Amazon S3) and, if needed, adjust hyperparameters to get the best model performance.
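Bedrock reads fine-tuning data from Amazon S3 as JSON Lines. The sketch below assumes the one-`{"prompt": ..., "completion": ...}`-object-per-line format used by several text models at the time of writing; the exact schema varies by base model, so check the model's documentation. The helper name, bucket, and key are hypothetical placeholders.

```python
import json
from typing import Iterable, Tuple


def to_jsonl(pairs: Iterable[Tuple[str, str]]) -> str:
    """Serialize (prompt, completion) pairs as JSON Lines: one training record per line."""
    lines = []
    for prompt, completion in pairs:
        if not prompt or not completion:
            raise ValueError("every record needs a non-empty prompt and completion")
        lines.append(json.dumps({"prompt": prompt, "completion": completion}))
    return "\n".join(lines) + "\n"


training_data = to_jsonl([
    ("Summarize: The invoice is overdue by 30 days.", "Invoice is 30 days overdue."),
    ("Summarize: The shipment left the warehouse today.", "Shipment dispatched today."),
])

# Next steps (need AWS credentials, so left as comments; names are placeholders):
# boto3.client("s3").put_object(Bucket="my-bucket", Key="train.jsonl", Body=training_data)
# then pass trainingDataConfig={"s3Uri": "s3://my-bucket/train.jsonl"} to the
# "bedrock" client's create_model_customization_job(...) call.
```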

C) Managed Agents for Seamless API-Driven Task Execution

Build agents that carry out complex business operations, such as travel booking, insurance claims processing, ad campaign creation, tax filing preparation, and inventory management. These fully managed agents extend the reasoning capabilities of FMs, letting them break down tasks, create an orchestration plan, and carry it out.

D) Leveraging Native RAG Support to Enhance FM Capabilities With Proprietary Data

Use Knowledge Bases for Amazon Bedrock to securely connect your FMs to your data sources for retrieval augmented generation (RAG), entirely within the managed service. This extends what your FMs can do and gives them a deeper understanding of your domain and organizational context.
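A minimal sketch of querying a knowledge base, assuming the boto3 `bedrock-agent-runtime` client and its `retrieve_and_generate` operation, which retrieves relevant chunks from the knowledge base and passes them to a chosen FM to write a grounded answer. The knowledge base ID and model ARN in the usage comment are placeholders, and the helper name is hypothetical.

```python
from typing import Any, List, Tuple


def ask_knowledge_base(
    client: Any, kb_id: str, model_arn: str, question: str
) -> Tuple[str, List[dict]]:
    """Retrieve passages from a Bedrock knowledge base and generate an answer grounded in them."""
    response = client.retrieve_and_generate(
        input={"text": question},
        retrieveAndGenerateConfiguration={
            "type": "KNOWLEDGE_BASE",
            "knowledgeBaseConfiguration": {
                "knowledgeBaseId": kb_id,  # ID of an existing knowledge base
                "modelArn": model_arn,  # ARN of the FM that writes the answer
            },
        },
    )
    # Generated answer plus citations pointing back at the retrieved source chunks.
    return response["output"]["text"], response.get("citations", [])


# Usage (needs AWS credentials and an existing knowledge base; values are placeholders):
# import boto3
# client = boto3.client("bedrock-agent-runtime", region_name="us-east-1")
# answer, citations = ask_knowledge_base(
#     client,
#     "KB12345",
#     "arn:aws:bedrock:us-east-1::foundation-model/anthropic.claude-3-haiku-20240307-v1:0",
#     "What is our refund policy?",
# )
```

Returning the citations alongside the answer makes it easy to show users which internal documents the response was based on.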

E) Ensuring Data Security and Compliance Certifications

Amazon Bedrock offers a range of data security and privacy features and supports compliance needs such as HIPAA eligibility and GDPR. Your data is not used to improve the base models and is not shared with third-party model providers. Data is encrypted in transit and at rest, and you can use your own encryption keys. You can also use AWS PrivateLink to establish private connectivity between FMs and your Amazon Virtual Private Cloud (Amazon VPC), so your traffic is not exposed to the public internet.

2) Amazon SageMaker

Amazon SageMaker is a fully managed machine learning service that makes it easier for data scientists and developers to build, train, and deploy ML models into a production-ready hosted environment. It includes an integrated Jupyter notebook authoring environment for easy access to your data, with no servers to manage. SageMaker provides built-in ML algorithms optimized for large-scale distributed data processing, plus native support for your own algorithms and frameworks, with flexible distributed training options that fit your workflows. You can deploy a model into a secure, scalable environment with a few clicks in SageMaker Studio or the SageMaker console.

Benefits of Amazon SageMaker

A) Faster Time to Market

SageMaker helps developers and data scientists build, train, and deploy ML models quickly, so organizations can launch products and services sooner and respond faster to market changes. Its streamlined workflow shortens the path from research and development to production, a key advantage in a fast-moving business landscape.

B) More Integrated Algorithms and Frameworks

SageMaker offers a broad selection of built-in algorithms and supports popular ML frameworks such as TensorFlow, PyTorch, and MXNet. These resources make it easier to get ML projects started and lower the barrier for individuals and teams new to machine learning, enabling faster, more accessible model development.

C) Automated Model Optimization

SageMaker includes automatic model tuning, which automates hyperparameter optimization to improve model performance and significantly reduces the time and manual effort needed to tune ML models.
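To show what hyperparameter tuning automates, here is random search, one of the strategies SageMaker automatic model tuning supports (alongside Bayesian optimization, grid search, and Hyperband), written in plain Python. This is a conceptual sketch, not the SageMaker API: `toy_objective` is a hypothetical stand-in for "train a model with these settings and return its validation loss", and the search ranges are arbitrary.

```python
import random
from typing import Callable, Dict, Optional, Tuple


def random_search(
    objective: Callable[[float, int], float], n_trials: int = 20, seed: int = 0
) -> Tuple[Dict[str, float], float]:
    """Sample hyperparameters at random and keep the combination with the lowest objective."""
    if n_trials < 1:
        raise ValueError("n_trials must be at least 1")
    rng = random.Random(seed)
    best_params: Optional[Dict[str, float]] = None
    best_score = float("inf")
    for _ in range(n_trials):
        params = {
            "learning_rate": 10 ** rng.uniform(-4, -1),  # log-uniform between 1e-4 and 1e-1
            "max_depth": rng.randint(2, 10),  # integer between 2 and 10 inclusive
        }
        score = objective(params["learning_rate"], params["max_depth"])
        if best_params is None or score < best_score:
            best_params, best_score = params, score
    return best_params, best_score


def toy_objective(learning_rate: float, max_depth: int) -> float:
    # Hypothetical validation loss with a minimum near learning_rate=0.01, max_depth=6.
    return (learning_rate - 0.01) ** 2 + 0.001 * (max_depth - 6) ** 2


best, loss = random_search(toy_objective, n_trials=50)
print(best, loss)
```

Managed tuning runs each trial as a separate training job, often in parallel, and smarter strategies such as Bayesian optimization use earlier results to choose the next settings instead of sampling blindly.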

D) Labeling Service With Ground Truth

SageMaker Ground Truth is a data labeling service that helps you label training data for machine learning tasks accurately and efficiently.

E) Built-In Model Monitoring

SageMaker offers integrated model monitoring that continuously watches ML models in production and alerts you when it detects performance issues, so you can keep models optimized over time.
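The core idea behind this kind of monitoring is comparing live data against a baseline captured at training time; SageMaker Model Monitor, for example, checks captured endpoint data against baseline statistics and constraints. The sketch below is a deliberately simplified illustration of that idea for a single numeric feature, not Model Monitor's actual algorithm; the threshold of 3 standard deviations is an arbitrary assumption.

```python
from statistics import mean, stdev
from typing import Sequence


def drift_alert(baseline: Sequence[float], live: Sequence[float], threshold: float = 3.0) -> bool:
    """Flag drift when the live mean is more than `threshold` baseline std devs from the baseline mean."""
    base_mean = mean(baseline)
    base_std = stdev(baseline)  # requires at least two baseline values
    if base_std == 0:
        # Constant baseline: any change in the live mean counts as drift.
        return mean(live) != base_mean
    return abs(mean(live) - base_mean) / base_std > threshold


baseline = [10, 11, 9, 10, 10, 11, 9]  # a feature's values captured at training time
print(drift_alert(baseline, [10, 10.5, 9.5]))  # False: live data looks like the baseline
print(drift_alert(baseline, [20, 21, 19]))  # True: the feature has shifted
```

In production the same comparison would run on a schedule over many features, and an alert would feed a notification or retraining workflow.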


3) Amazon EC2 UltraClusters

Amazon Elastic Compute Cloud (Amazon EC2) UltraClusters let you scale to thousands of GPUs or purpose-built ML accelerators such as AWS Trainium, giving you on-demand access to supercomputer-class performance. They make this performance available to developers working on machine learning, generative AI, and high performance computing (HPC) projects through a simple pay-as-you-go model, with no complex setup or ongoing maintenance costs.

EC2 UltraClusters consist of many accelerated EC2 instances co-located in a single AWS Availability Zone and interconnected with Elastic Fabric Adapter (EFA) networking in a petabit-scale, nonblocking network. They also provide access to Amazon FSx for Lustre, fully managed shared storage built on a popular high-performance parallel file system, so you can process massive datasets on demand and at scale with sub-millisecond latencies.

This scale-out capability makes EC2 UltraClusters particularly well suited to distributed machine learning training and tightly coupled HPC workloads, giving developers the compute resources to experiment with and deploy next-generation AI solutions quickly.

Amazon EC2 P5 and Trn1 instances are built on a second-generation EC2 UltraClusters architecture whose network fabric reduces the number of hops across the cluster, lowering latency and improving scalability.

Benefits of Amazon EC2 UltraClusters

A) Accelerating Time to Solution for Distributed Training and High Performance Computing

EC2 UltraClusters can cut the time needed for training and HPC tasks from weeks to days. Their scale and high-speed networking shorten development cycles, so businesses can bring deep learning, generative AI, and HPC applications to market faster and stay competitive in demanding industries.

B) Immediate Availability of an Exascale Supercomputer

P5 instances are integrated into EC2 UltraClusters, which can accommodate up to 20,000 H100 GPUs, providing an impressive aggregate compute capability exceeding 20 exaflops. Similarly, Trn1 instances have the scalability to support up to 30,000 Trainium accelerators, while P4 instances can scale to accommodate 10,000 A100 GPUs, delivering exascale computing capabilities on a flexible, on demand basis.
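As a quick sanity check on the P5 figures, dividing the aggregate compute by the GPU count gives the implied throughput per GPU. This is only arithmetic on the numbers stated above; the actual per-GPU figure depends on numeric precision (for example FP8 versus FP16) and on whether sparsity is counted, which the text does not specify.

$$
\frac{20\ \text{exaflops}}{20{,}000\ \text{GPUs}}
= \frac{20 \times 10^{18}\ \text{FLOP/s}}{2 \times 10^{4}}
= 10^{15}\ \text{FLOP/s} \approx 1\ \text{petaflop per GPU}
$$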

C) Enhanced Flexibility for Performance and Cost Optimization

EC2 UltraClusters support a growing range of EC2 instance types, so you can choose the compute option that best balances performance and cost for your specific workload.

4) Amazon QuickSight

Amazon QuickSight gives data-driven organizations scalable, unified business intelligence (BI). Users can meet diverse analytical needs through interactive dashboards, paginated reports, embedded analytics, and natural language queries, all from a single source of truth.

Amazon QuickSight is a fast, cloud-based BI service that delivers insights to everyone in your organization. As a fully managed service, it simplifies creating and sharing interactive dashboards that include machine learning (ML) insights.

Benefits of Amazon QuickSight

A) Empowering Every User With Business Intelligence

Meet the diverse analytical needs of your entire user base from a single source of accurate data, using interactive dashboards, paginated reports, embedded analytics, and natural language queries. These tools let users across your organization extract insights, make data-driven decisions, and work more efficiently.

B) Accelerate Development

Speed up development with a unified authoring experience for creating and sharing insights as modern dashboards, paginated reports, and embedded analytics. This shortens the time needed to build and share insights while keeping analysis consistent and fast across your organization.

C) Efficiently Expand Your Operations

Scale to tens of thousands of users without setting up, configuring, or managing servers, so your deployment can grow with demand while keeping administrative overhead low.

D) Minimize Costs With Pay-As-You-Go Pricing

QuickSight offers flexible usage-based pricing, so you don't need to buy large numbers of end-user licenses for broad BI and embedded analytics deployments. Costs track actual usage, giving organizations of any size precise control over spending, minimal upfront commitment, and more value from their BI initiatives.

5) Amazon Personalize

Amazon Personalize is a fully managed machine learning (ML) service that uses your data to generate item recommendations for your users. It simplifies building applications for a wide range of personalization use cases and automates many of the complex steps involved in building, training, and deploying ML models. It is built on the same ML technology used by Amazon.com and does not require extensive ML expertise.

Amazon Personalize lets developers quickly build and deploy personalized recommendations and intelligent user segmentation at scale using ML. Because it adapts to your requirements, you can deliver the right customer experience at the right time and place.

Amazon Personalize accelerates digital transformation with ML, making it easier to integrate personalized recommendations into your existing websites, applications, email marketing systems, and more.
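Once a Personalize model is deployed as a campaign, integrating it into a website or app typically comes down to one runtime call per page view or email. The sketch below assumes the boto3 `personalize-runtime` client and its `get_recommendations` operation; the campaign ARN and user ID in the usage comment are placeholders, and the helper name is hypothetical.

```python
from typing import Any, List


def recommend(client: Any, campaign_arn: str, user_id: str, n: int = 10) -> List[str]:
    """Return the top-n recommended item IDs for a user from a deployed Personalize campaign."""
    response = client.get_recommendations(
        campaignArn=campaign_arn,
        userId=user_id,
        numResults=n,
    )
    # Each entry in itemList carries an itemId (and usually a score); keep the IDs in ranked order.
    return [item["itemId"] for item in response["itemList"]]


# Usage (needs AWS credentials and a deployed campaign; values are placeholders):
# import boto3
# client = boto3.client("personalize-runtime", region_name="us-east-1")
# print(recommend(client, "arn:aws:personalize:us-east-1:123456789012:campaign/my-campaign", "user-42", 5))
```

The returned IDs are then joined with your own catalog data to render product tiles, email content, and so on.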

Benefits of Amazon Personalize

A) Recommendations

Amazon Personalize uses advanced machine learning algorithms to generate recommendations that adapt to each user's needs, preferences, and changing behavior. As a result, users see content, services, or products that are highly relevant to them, improving their experience and engagement with your platform.

B) User Segmentation

Amazon Personalize can automatically segment users based on factors such as their interest in specific product categories or preferred brands. Segmentation lets you categorize and target users more effectively and tailor recommendations to their preferences. Whether users favor electronics, fashion, or other categories, Amazon Personalize helps you fine-tune your offerings for a more personalized and engaging experience.

C) Lead Generation Initiatives

Amazon Personalize enhances the efficiency of your lead generation campaigns across various marketing channels. By using machine learning algorithms, it can provide insights into users’ preferences and behaviors, allowing you to tailor your campaigns for improved prospect targeting. This means you can reach clients more effectively, increasing the chances of conversion and achieving better results in your marketing efforts.

D) Customer Experience

Amazon Personalize empowers you to provide tailored customer experiences by deploying recommendations and user segmentation at optimal moments and locations throughout their journey, ensuring a personalized approach for each individual.

6) Amazon Polly

Amazon Polly uses advanced deep learning techniques to synthesize natural, human-like speech from written text. With a wide range of lifelike voices across many languages, it is a versatile tool for building speech-enabled applications.

Whether you're converting articles into audio for accessibility, adding voice interaction to an application, or creating voiceovers for multimedia content, Amazon Polly lets you bring speech synthesis into your applications and make content more accessible, interactive, and dynamic.

Amazon Polly is used in many scenarios, including eLearning mobile apps, news readers, interactive games, accessibility tools for people with visual impairments, and the growing Internet of Things (IoT). Its Neural Text-to-Speech (NTTS) voices keep improving in quality through advances in machine learning, delivering highly natural, human-like speech.

Amazon Polly also offers a Newscaster speaking style designed for news narration. Whether the goal is a better user experience, improved accessibility, or human-like narration, Amazon Polly suits a broad range of applications.

Benefits of Amazon Polly

A) Cloud-Based Solution

On-device text-to-speech (TTS) requires significant computing resources, particularly CPU power, RAM, and storage. This can raise development costs and increase power consumption, especially on tablets and smartphones. Performing TTS conversion in the AWS Cloud greatly reduces local resource requirements, enabling broad language and voice support at high quality. Improvements in speech quality also reach end users immediately, without additional device updates.

B) Extensive Language and Voice Portfolio Support

Amazon Polly supports many languages and offers multiple male and female voices for most of them. At the time of writing, Neural Text-to-Speech (NTTS) offered three British English and eight US English voices, with further expansion planned. The US English voices Matthew and Joanna can also use the Neural Newscaster speaking style, which resembles the delivery of professional news anchors.
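A minimal sketch of requesting the Newscaster style, assuming the boto3 `polly` client's `synthesize_speech` operation with SSML input. The `<amazon:domain name="news">` tag selects the Newscaster style and requires the neural engine; voice support for the style may change over time, so treat the default voice as an assumption. The helper names are hypothetical.

```python
from typing import Any
from xml.sax.saxutils import escape


def newscaster_ssml(text: str) -> str:
    """Wrap plain text in SSML that requests Polly's Newscaster speaking style."""
    # escape() protects characters such as & and < that would otherwise break the SSML.
    return f'<speak><amazon:domain name="news">{escape(text)}</amazon:domain></speak>'


def synthesize_news(client: Any, text: str, voice_id: str = "Matthew") -> bytes:
    """Synthesize text with a neural voice in the Newscaster style and return MP3 bytes."""
    response = client.synthesize_speech(
        Engine="neural",  # the Newscaster style is only available with the neural engine
        OutputFormat="mp3",
        TextType="ssml",
        Text=newscaster_ssml(text),
        VoiceId=voice_id,
    )
    return response["AudioStream"].read()  # AudioStream is a readable byte stream


# Usage (needs AWS credentials, so it is left commented out):
# import boto3
# audio = synthesize_news(boto3.client("polly", region_name="us-east-1"), "Markets closed higher today.")
# with open("news.mp3", "wb") as f:
#     f.write(audio)
```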

C) Enhanced Quality

Amazon Polly provides advanced text-to-speech technology, including state-of-the-art neural TTS and high-quality standard TTS. Both deliver natural-sounding speech with accurate pronunciation and handle linguistic details such as abbreviations, acronym expansion, date and time interpretation, and homograph disambiguation. The result is speech that is both lifelike and linguistically precise, suitable for applications that need high-quality voice output.

D) Low Latency

Amazon Polly responds quickly, which makes it a good fit for low-latency use cases such as dialog systems. Fast text-to-speech synthesis improves real-time interactions, so voice assistants, customer service chatbots, and other applications that need immediate spoken responses can answer users with minimal delay.

E) Cost-Effective

Amazon Polly uses a pay-per-use model with no upfront setup costs, so you can start small and scale as your application grows.

Because you pay only for what you use, it is a budget-friendly way to add high-quality text-to-speech to your applications. Whether you're a startup or a large enterprise, the model adapts to your needs and keeps costs under control.

7) Amazon CodeWhisperer

Amazon CodeWhisperer is a machine learning (ML)-powered service that boosts developer productivity by generating code recommendations from developers' natural language comments and their existing code in the IDE. It streamlines both frontend and backend development with automated code suggestions, and it can save time and resources when writing code for building and training ML models.

CodeWhisperer has been trained on billions of lines of code. It generates real-time recommendations ranging from snippets to complete functions, based on your comments and the code you've already written. This lets you skip time-consuming coding tasks and speeds up development, especially when working with unfamiliar APIs.

CodeWhisperer can flag or filter out code recommendations that resemble open source training data. It shows relevant details, such as the repository URL and license of the associated open source project, so you can review these suggestions and add attribution where required.

Use CodeWhisperer security scans to find hard-to-detect vulnerabilities in your codebase and get code recommendations for fixing them promptly. Scans help align your code with recognized security guidance, such as that published by the Open Web Application Security Project (OWASP), and with best practices for cryptographic libraries and other security guidelines.
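For a sense of the kind of issue such scans target, here is a generic example of SQL injection, a vulnerability class covered by OWASP guidance, together with the usual fix. This is an illustration written for this article using Python's built-in `sqlite3` module, not actual CodeWhisperer output; the function and table names are hypothetical.

```python
import sqlite3


def find_user_unsafe(conn: sqlite3.Connection, name: str):
    # Vulnerable: user input is pasted into the SQL text, so crafted input can change the query.
    return conn.execute(f"SELECT id FROM users WHERE name = '{name}'").fetchall()


def find_user_safe(conn: sqlite3.Connection, name: str):
    # Remediated: a parameterized query sends the input as data, never as SQL.
    return conn.execute("SELECT id FROM users WHERE name = ?", (name,)).fetchall()


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE users (id INTEGER, name TEXT)")
conn.executemany("INSERT INTO users VALUES (?, ?)", [(1, "alice"), (2, "bob")])

payload = "x' OR '1'='1"  # classic injection string
print(len(find_user_unsafe(conn, payload)))  # the injected OR clause matches every row
print(find_user_safe(conn, payload))  # the payload is treated as a literal name, so no rows match
```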

Benefits of Amazon CodeWhisperer

A) Enhance Code Quality

CodeWhisperer helps raise code quality through static analysis. It identifies bugs, vulnerabilities, and poor coding practices in source code, so developers can address these issues early, before they escalate into significant problems. This reduces the number of bugs, shortens debugging time, and improves overall software quality.

B) Boosts Productivity

CodeWhisperer excels at automating numerous repetitive and laborious tasks inherent in the development process. It offers a range of capabilities, including automatic refactoring, code suggestions, and code generation, effectively reducing the time and effort developers need to invest. This automation liberates developers to concentrate on tackling more intricate challenges and expeditiously crafting new features, ultimately enhancing their efficiency in software development.

C) Promotes Collaborative Work

CodeWhisperer offers a suite of features designed to streamline team collaboration. It enables developers to efficiently share and review code, simplifying the code review process. Furthermore, it grants the capability to establish coding standards and implement customized rules, ensuring that the code aligns with the team’s specific requirements.

D) Expedites the Learning Process

CodeWhisperer is a valuable resource for aspiring developers or individuals looking to master a new programming language. It furnishes contextual code hints, integrated documentation, and practical code samples, all contributing to a more profound comprehension of best practices and coding conventions. As a result, this accelerates the learning journey and empowers developers to acquire proficiency in new programming skills swiftly.

E) Encourages Adherence to Best Coding Practices

CodeWhisperer plays a pivotal role in cultivating sound coding practices among team members. Through its static analysis and code suggestions, it encourages developers to maintain uniform coding standards and avoid troublesome code patterns. This, in turn, contributes to creating code that is more readable, sustainable, and resilient in the long run.

Case Study: Solving Energy Sector Challenges with Generative AI

Veritis partnered with a major energy company on a high-impact project that used generative AI to address complex operational challenges. The client wanted to improve decision-making, automate report generation, and gain deeper insights from vast volumes of industrial data. By deploying advanced GenAI models integrated into the client's cloud environment, including tools aligned with Amazon's generative AI offerings, Veritis significantly improved data processing, strengthened predictive maintenance, and reduced manual workload. The project shows how generative AI can drive innovation, efficiency, and smarter operations in traditional industries.

Read the complete case study: Solving Energy Sector Challenges with Generative AI.

Conclusion

Amazon’s Generative AI stands at the forefront of AI and machine learning innovation, offering businesses transformative capabilities that enhance productivity, creativity, and innovation across diverse industries. With the power to generate content ranging from text to code, this technology empowers organizations to streamline processes and foster new avenues for growth. The emergence of such advanced AI tools has opened doors for companies like Veritis to lead the charge in maximizing their potential.

With more than two decades of cloud and AI expertise, Veritis, a Stevie Award winner, has consistently delivered top-notch generative AI services on AWS. As an AWS, Azure, and GCP certified cloud consultant, Veritis combines deep industry knowledge with 100% client satisfaction and a proven track record of success. By embracing these cutting-edge technologies, businesses can redefine their operations and build creativity, efficiency, and operational excellence into their strategic goals.
