Apache Hadoop and Apache Spark are two of the dominant processing frameworks in big data architectures. Both sit at the center of a rich ecosystem of open source platforms that store, manage, and analyze massive data collections. However, organizations are often unsure which of the two to choose.

To add to the confusion, these technologies frequently work together, with Spark processing data stored in the Hadoop Distributed File System (HDFS). However, each is a separate entity with its own benefits, drawbacks, and business applications. As a result, businesses often assess both of them for potential use in applications.

Most of the Hadoop vs. Spark debate centers on whether a large data environment should be optimized for batch processing or for real time processing. But that oversimplifies the differences between the two frameworks: Hadoop and some of its components can now also be used for workloads involving interactive querying and real time analytics.

While both Hadoop and Spark excel in processing massive datasets, they differ significantly in their architectures and use cases. Hadoop’s batch oriented processing is well suited for tasks that require fault tolerance and high throughput but do not demand real time responsiveness. On the other hand, Spark’s ability to cache data in memory and perform iterative computations efficiently makes it ideal for iterative algorithms, machine learning, and stream processing applications where low latency responses are crucial.

In this era, the choice between Hadoop and Spark depends on various factors, such as the nature of the data, processing requirements, and organizational objectives. While Hadoop remains a robust choice for batch processing and long running jobs, Spark’s speed and flexibility make it a preferred option for interactive analytics and emerging use cases demanding real time insights. Ultimately, the decision between Hadoop and Spark hinges on striking the right balance between performance, scalability, and ease of use to meet the requirements of each enterprise.

There is a wide range of big data processing solutions available today. Additionally, many vendors provide enterprise features to accompany the open source platforms, and many companies run both Hadoop and Spark for their big data use cases. Initially, Hadoop was only suitable for batch applications. Spark, in contrast, was initially created to perform batch operations faster than Hadoop.

Additionally, Spark applications are frequently built on top of HDFS and the YARN resource manager. Spark has no file system of its own and needs an external file system or repository for storage; HDFS is one of its leading storage choices. Before comparing Hadoop vs. Spark in depth, let’s learn about Apache Hadoop and Apache Spark.

What is Apache Hadoop?

Hadoop was created by Doug Cutting and Mike Cafarella, who started it to process massive amounts of data. It grew out of the Apache Nutch project, was developed heavily at Yahoo from 2006, and later became a top level Apache open source project. Although the name is sometimes expanded as High Availability Distributed Object Oriented Platform, it is not an acronym: Cutting named the project after his son’s toy elephant. What Hadoop offers developers is high availability through the distribution of storage and processing tasks across many machines.

Apache Hadoop is an open source platform for storing and processing large datasets. It offers reliable, scalable, and distributed storage and processing of big data.

This Java based software can scale from a single server to thousands of machines, each providing storage and local computing. It offers the building blocks for developing different applications and services.

Hadoop is designed to run on clusters of commodity computers. It offers a cost effective solution for storing and processing a large volume of structured, semi structured, and unstructured data with no format restrictions. Hadoop is primarily built in Java, and jobs can also be written in numerous other languages, such as Perl, Ruby, Python, PHP, R, C++, and Groovy.

Apache Hadoop involves four main modules, and they are:

1) HDFS

Hadoop Distributed File System (HDFS) controls how big data sets are stored within a Hadoop cluster. It can hold both structured and unstructured data. In addition, it offers high fault tolerance and high throughput data access.

2) YARN

YARN stands for Yet Another Resource Negotiator. It is Hadoop’s cluster resource manager: it schedules tasks and allocates resources (such as CPU and memory) to the applications running on the cluster.

3) Hadoop MapReduce

Hadoop MapReduce divides large data processing jobs into smaller tasks, distributes those tasks over various nodes, executes each task in parallel, and then combines the results.

4) Hadoop Common (Hadoop Core)

Hadoop Common is the set of shared tools and libraries that support the other modules: HDFS, YARN, and Hadoop MapReduce. It is also referred to as Hadoop Core.
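The divide, distribute, and combine flow described for Hadoop MapReduce can be illustrated with a small word count. This is only a single-process sketch in plain Python, not the Hadoop API: the function names `map_phase`, `shuffle_phase`, and `reduce_phase` are hypothetical, and each input chunk stands in for a block of data that a real cluster would process on a separate node.

```python
from collections import defaultdict


def map_phase(chunk):
    """Map: emit a (word, 1) pair for every word in one input chunk."""
    return [(word.lower(), 1) for word in chunk.split()]


def shuffle_phase(mapped_chunks):
    """Shuffle: group all emitted values by key across every chunk."""
    grouped = defaultdict(list)
    for pairs in mapped_chunks:
        for key, value in pairs:
            grouped[key].append(value)
    return grouped


def reduce_phase(grouped):
    """Reduce: collapse each key's list of values into a single count."""
    return {key: sum(values) for key, values in grouped.items()}


def word_count(chunks):
    # On a cluster, each map_phase call would run on a different node.
    mapped = [map_phase(chunk) for chunk in chunks]
    return reduce_phase(shuffle_phase(mapped))


# Hypothetical input: two chunks standing in for two data blocks.
chunks = ["big data big clusters", "data nodes store data"]
counts = word_count(chunks)
print(counts)
```

Because every map call only sees its own chunk, the map step can be spread across as many nodes as there are chunks; only the grouped intermediate pairs need to be brought together for the reduce step.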

Benefits of Hadoop

Data defines how businesses can improve their operations. Many industries revolve around data collected and analyzed through multiple methods and technologies. Hadoop is one of the popular tools for extracting information from data, and it has clear advantages in dealing with big data. Let’s look at the most common benefits of Hadoop.

1) Cost

This technology is very economical; anyone can access its source code and modify it according to business needs. Hadoop runs on inexpensive commodity hardware, unlike an RDBMS, which requires costly hardware and high end processors to handle extensive data. Because storing extensive data in an RDBMS is not cost effective, organizations relying on one often ended up deleting their raw data.

2) Scalable

Hadoop is a highly scalable tool that can store vast amounts of data on anything from a single server to thousands of machines. Users can expand the cluster’s size without downtime by adding new nodes as required, unlike an RDBMS, which can’t easily scale to handle massive data. Storage capacity grows with the number of nodes rather than being capped by a single machine.

3) Speed

Hadoop uses HDFS to handle its storage, which keeps track of where data is located on the cluster. Speed is a crucial factor when handling a massive amount of unstructured data. With Hadoop, it is possible to process terabytes of data in minutes and petabytes in hours.

4) Flexible

Hadoop is designed to access different datasets, such as structured, semi structured, and unstructured data, to generate value from those datasets. This means enterprises can use Hadoop software to extract business insights from data sources such as email and social media conversations.

5) Availability

The open source nature of Hadoop makes it available to everyone who requires it. Its enormous open source community has made big data processing widely accessible.

6) Low Network Traffic

Hadoop divides each job into smaller sub tasks, which are then assigned to the available data nodes in the cluster. Each data node processes the data it already stores locally, so little data has to move across the network, which keeps traffic in a Hadoop cluster to a minimum.
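The way replicated storage and local processing keep network traffic low can be sketched as follows. This is a simplified model, not how HDFS or YARN are implemented: the round-robin placement, the node names, and the functions `place_blocks` and `schedule_tasks` are assumptions for illustration (real HDFS placement is rack aware), although a replication factor of 3 is the usual HDFS default.

```python
def place_blocks(num_blocks, nodes, replication=3):
    """Store each block on `replication` distinct nodes, chosen round-robin."""
    if replication > len(nodes):
        raise ValueError("replication factor cannot exceed the number of nodes")
    return {
        block: [nodes[(block + i) % len(nodes)] for i in range(replication)]
        for block in range(num_blocks)
    }


def schedule_tasks(placement):
    """Run each block's task on the least-loaded node that already holds a replica."""
    load = {}
    assignment = {}
    for block, replicas in placement.items():
        chosen = min(replicas, key=lambda node: load.get(node, 0))
        assignment[block] = chosen
        load[chosen] = load.get(chosen, 0) + 1
    return assignment


# Hypothetical four-node cluster storing a file split into eight blocks.
nodes = ["node-a", "node-b", "node-c", "node-d"]
placement = place_blocks(num_blocks=8, nodes=nodes)
assignment = schedule_tasks(placement)

# A remote read happens only when a task runs on a node without a local replica.
remote_reads = sum(1 for block, node in assignment.items() if node not in placement[block])

# If one node fails, count the replicas of each block that survive elsewhere.
surviving = {block: [n for n in replicas if n != "node-a"] for block, replicas in placement.items()}
print(remote_reads, min(len(r) for r in surviving.values()))
```

In this model every task reads its block from local disk, and losing a single node still leaves at least two copies of every block, which is the intuition behind both the low network traffic and the fault tolerance described above.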

What is Apache Spark?

Apache Spark is an open source data processing platform that can quickly execute data science, data engineering, and machine learning operations on single node machines or clusters. Spark was created to speed up Hadoop’s computational process and is maintained by the Apache Software Foundation. Spark can run on top of Hadoop, but because it has its own cluster management, it often uses Hadoop for storage only.

Apache Spark supports numerous programming languages like Java, R, Scala, and Python. It includes libraries for a wide range of tasks, such as SQL, machine learning, and streaming, and it can be used anywhere from a laptop to a cluster of hundreds of servers. It typically runs quicker than Hadoop MapReduce because it processes data in random access memory (RAM) rather than reading and writing intermediate results through a file system. Moreover, Spark can now handle use cases that Hadoop MapReduce was not designed for.
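The difference between working through a file system and working in RAM can be sketched with a toy iterative job. This is a plain Python illustration, not Spark or Hadoop code: `iterate_on_disk` and `iterate_in_memory` are hypothetical names, the doubling step stands in for any per-pass computation, and counting file operations is only a rough proxy for the I/O cost of a real cluster.

```python
import json
import os
import tempfile


def iterate_on_disk(values, passes):
    """MapReduce-style: every pass reads its input from disk and writes its output back."""
    disk_ops = 0
    with tempfile.TemporaryDirectory() as workdir:
        path = os.path.join(workdir, "stage.json")
        with open(path, "w") as f:
            json.dump(values, f)  # initial write of the input
        disk_ops += 1
        for _ in range(passes):
            with open(path) as f:
                current = json.load(f)  # read the previous pass's output
            disk_ops += 1
            current = [v * 2 for v in current]
            with open(path, "w") as f:
                json.dump(current, f)  # persist this pass's output
            disk_ops += 1
        with open(path) as f:
            result = json.load(f)  # final read of the result
        disk_ops += 1
    return result, disk_ops


def iterate_in_memory(values, passes):
    """Spark-style: keep the working set in RAM between passes, with no file I/O here."""
    current = list(values)
    for _ in range(passes):
        current = [v * 2 for v in current]
    return current, 0


disk_result, disk_ops = iterate_on_disk([1, 2, 3], passes=3)
memory_result, memory_ops = iterate_in_memory([1, 2, 3], passes=3)
print(disk_result == memory_result, disk_ops, memory_ops)
```

Both versions compute the same result, but the disk-based version pays for a read and a write on every pass. That per-pass cost is why iterative workloads such as machine learning tend to benefit most from in-memory processing.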

Apache Spark combines large scale data processing with artificial intelligence (AI) in a single framework, and it is one of the most significant open source projects in the data space. This allows users to execute cutting edge machine learning (ML) and AI algorithms after extensive data transformations and analysis.

Five Main Modules of Apache Spark

1) Spark Core

Spark Core is the underlying execution engine that schedules and dispatches tasks and coordinates input and output (I/O) operations.

2) Spark SQL

Spark SQL uses information about the structure of the data so that it can optimize structured data processing.

3) Spark Streaming and Structured Streaming

Spark Streaming and Structured Streaming add stream processing capabilities. Spark Streaming gathers data from several streaming sources and splits it into micro batches that are processed as a continuous stream. Structured Streaming, built on Spark SQL, decreases latency and makes programming easier.

4) Machine Learning Library (MLlib)

MLlib is a collection of scalable machine learning algorithms and tools for selecting features and constructing ML pipelines. The main API for MLlib is DataFrames, which offers consistency across programming languages such as Python, Scala, and Java.

5) GraphX

GraphX is a user friendly, scalable computation engine that allows the interactive construction, editing, and analysis of graph structured data.
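The micro batch idea behind Spark Streaming can be sketched in a few lines. This is a plain Python illustration rather than the Spark Streaming API: the function `micro_batches`, the one second interval, and the sample events are assumptions chosen for the example.

```python
def micro_batches(events, batch_interval):
    """Group (timestamp, value) events into consecutive fixed-width time windows."""
    batches = {}
    for timestamp, value in events:
        window = int(timestamp // batch_interval)  # index of the window this event falls in
        batches.setdefault(window, []).append(value)
    return [batches[window] for window in sorted(batches)]


# Hypothetical stream: (seconds since start, reading) pairs arriving over three seconds.
events = [(0.2, 5), (0.7, 3), (1.1, 4), (2.5, 1), (2.9, 6)]
batches = micro_batches(events, batch_interval=1.0)

# Each micro batch is then processed like a small batch job, here a simple sum.
totals = [sum(batch) for batch in batches]
print(batches, totals)
```

Treating the stream as a series of small batches lets the same batch style logic run on live data, at the cost of a latency of roughly one batch interval.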

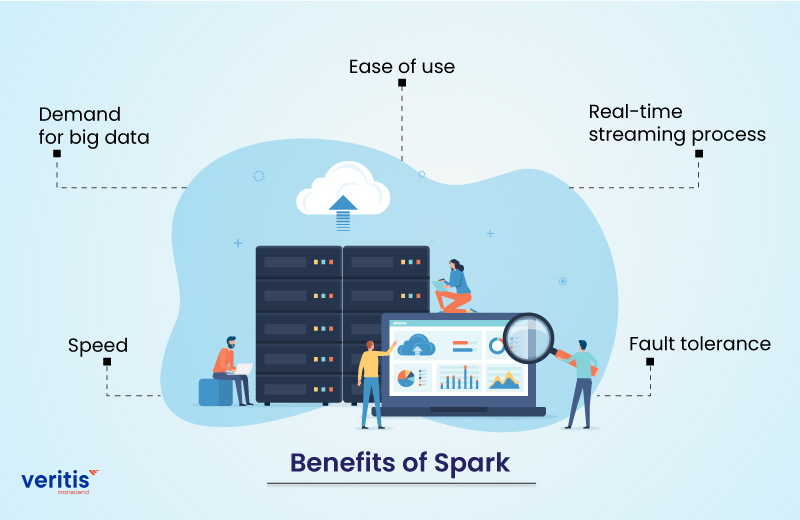

Benefits of Spark

Apache Spark can advance big data initiatives across industries, and it has numerous benefits for dealing with big data. Let’s look at the most common benefits of Spark.

1) Speed

Processing speed is vital for big data. Because of its speed, Apache Spark is incredibly popular among data scientists. Spark can be up to 100 times quicker than Hadoop MapReduce for processing massive data, because it computes in memory (RAM), while Hadoop MapReduce writes intermediate data to local disk. Spark has been run on clusters of over 8,000 nodes processing many petabytes of data.

2) Ease of use

This open source application provides easy to use APIs for working with big data sets. It provides over 80 high level operators that make it simple to build parallel apps. The same Spark code can be reused to join streams with historical data, run ad hoc queries on stream state, and do batch processing.

3) Demand for Big Data

IBM announced that it would train more than 1 million data scientists and engineers in Apache Spark. This reflects the numerous opportunities Spark offers for big data and the high demand for it among developers.

4) Fault Tolerance

Apache Spark offers fault tolerance through its core abstraction, the Resilient Distributed Dataset (RDD). RDDs are designed to handle the failure of any worker node in the cluster: because Spark records how each dataset was built, lost partitions can be recomputed, which keeps data loss to a minimum.

5) Real time Streaming Process

Apache Spark includes a feature for real time stream processing. Hadoop MapReduce can only process data that has already been stored, not real time data. Spark Streaming resolves this limitation.
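The fault tolerance described above, where a lost partition is rebuilt from the recorded steps that produced it, can be sketched with a toy class. This is not the Spark RDD API: `LineageDataset` and its methods are hypothetical, and the in-memory `cache` dictionary stands in for partitions held on worker nodes that may fail.

```python
class LineageDataset:
    """Toy stand-in for an RDD: remembers its source and transformations, not just results."""

    def __init__(self, source_partitions, transforms=()):
        self.source_partitions = source_partitions  # durable input, e.g. files on HDFS
        self.transforms = tuple(transforms)  # recorded lineage of transformations
        self.cache = {}  # computed partitions held in memory; may be lost

    def map(self, fn):
        """Record a transformation lazily instead of applying it right away."""
        return LineageDataset(self.source_partitions, self.transforms + (fn,))

    def compute_partition(self, index):
        """Return a partition, recomputing it from the lineage if it is not cached."""
        if index not in self.cache:
            data = self.source_partitions[index]
            for fn in self.transforms:
                data = [fn(x) for x in data]
            self.cache[index] = data
        return self.cache[index]

    def collect(self):
        return [
            x
            for index in range(len(self.source_partitions))
            for x in self.compute_partition(index)
        ]

    def lose_partition(self, index):
        """Simulate a worker failure that wipes one computed partition."""
        self.cache.pop(index, None)


dataset = LineageDataset([[1, 2], [3, 4]]).map(lambda x: x * 10).map(lambda x: x + 1)
before_failure = dataset.collect()
dataset.lose_partition(1)  # the node holding partition 1 fails
after_failure = dataset.collect()  # partition 1 is rebuilt from source and lineage
print(before_failure, after_failure)
```

Only the lost partition is recomputed; the partition that survived is served from memory, which is why recovery by lineage is usually cheaper than restarting the whole job.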

Hadoop and Spark in 2025: Complementary, Not Competitive

Despite the rise of real time analytics, Hadoop remains a significant player, reassuring enterprises that their existing investments are not obsolete.

In 2025, many enterprises still rely on Hadoop for legacy batch processing and HDFS based storage, particularly in:

- Government and public sector for compliance heavy workloads

- Financial services for long term batch processing and data archiving

- Research and healthcare, where durable, fault tolerant storage is critical

At the same time, Spark has proven its adaptability, emerging as the go to engine for a wide range of analytics tasks, including real time, in memory, and iterative analytics, as well as AI and ML pipelines.

Most modern data architectures combine both technologies:

- Hadoop → Long term, scalable storage and batch computation (MapReduce)

- Spark → Fast in memory processing for streaming and interactive analytics

Enterprise Insight: It’s a common industry practice, with about 65% of enterprises using Hadoop and Spark in tandem, often in hybrid cloud or edge deployments, to balance historical processing and real time analytics.

Use Cases of Apache Hadoop and Apache Spark

Apache Hadoop Use Cases

1) Handling Large Datasets

Hadoop’s HDFS excels in managing massive datasets that exceed available memory capacity, enabling storage and processing of historical data, logs, and archives.

2) Data Warehousing and Data Lakes

Hadoop’s HDFS provides scalable storage solutions for constructing robust data warehouses and lakes.

3) Log Analysis and Extract Transform Load (ETL)

With Hadoop’s distributed capabilities, organizations can efficiently process, transform, and load vast log files from various sources.

4) Big Data on a Budget

Hadoop utilizes cost effective hard disks for storage, making it a budget friendly option for large scale data storage and processing, especially compared to Spark, which demands more memory.

5) Scientific Data Analysis

Hadoop’s parallel processing power enables the analysis of large scientific datasets on distributed clusters, including climate data, genetic sequences, and astronomical observations.

Apache Spark Use Cases

1) Real time Stream Data Analysis

Spark excels in processing real time streaming data, making it ideal for monitoring social media feeds, financial transactions, sensor data, or log streams.

2) Machine Learning Applications

Spark’s MLlib offers a comprehensive set of machine learning algorithms, facilitating the development of recommendation systems, fraud detection models, natural language processing (NLP), and predictive analytics. Its scalability and seamless integration support efficient ML pipelines.

3) Interactive Data Exploration

Leveraging Spark’s SQL like interfaces, users can rapidly uncover hidden patterns within large datasets for interactive exploration and swift prototyping.

4) Fraud Detection and Anomaly Identification

Spark’s streaming capabilities enable real time identification of suspicious activities, bolstering system security and mitigating financial losses.

5) Personalized Recommendations

Spark’s efficient processing of large datasets makes it an excellent choice for building accurate and dynamic recommendation systems in ecommerce or entertainment platforms.

Comparison between Apache Hadoop and Apache Spark

Let’s compare Apache Hadoop and Apache Spark across several parameters.

| Parameters | Apache Hadoop | Apache Spark |
| --- | --- | --- |
| Cost | Hadoop runs at a low cost because it relies on disk storage | Spark runs at a higher cost because it relies on large amounts of RAM |
| Performance | Hadoop is relatively slow because it stores data from numerous sources on disk and uses MapReduce to process it in batches | Spark is faster because it processes data in RAM |
| Data processing | It is ideal for linear data and batch processing | It is ideal for live unstructured data streams and real time processing |
| Security | It is more secure. Hadoop supports various access control and authentication methods. | It is less secure by default. Security can be improved by enabling shared secret authentication or event logging. |
| Efficiency | It is built to manage batch processing efficiently | It is built to manage real time data efficiently |
| Fault tolerance | It is a highly fault tolerant system. In the event of a problem, it uses the data that is replicated among the nodes. | When a partition fails, it can recreate a dataset by tracking how the RDD blocks were constructed. To reconstruct data across nodes, Spark can also use a DAG. |
| Scalability | It is simple to scale by adding nodes and disks for storage | It is harder to scale because it depends on RAM for computations |
| Supported programming languages | Java, Perl, Ruby, Python, PHP, R, C++, and Groovy | Java, R, Scala, and Python |
| Machine learning | It is relatively slow | It is faster with in memory processing |
| Category | It is the data processing engine | It is the data analytics engine |
| Latency | It has high latency computing | It has low latency computing |
| Scheduler | It requires an external job scheduler | It doesn’t require an external scheduler |
| Open source | Yes | Yes |
| Data integration | Yes | Yes |
| Speed | Lower performance | Higher performance (up to 100x faster in memory) |
| Developer community support | Yes | Yes |
| Memory consumption | It relies mainly on disk | It relies mainly on RAM |

Choosing the Right Tool: Trade Off and Use Case Framework

To make the right decision, consider performance, cost, ecosystem, and use cases:

Hadoop is ideal for:

- Batch warehousing and ETL pipelines

- Historical analytics and archival data

- Cost effective, disk based storage at scale

Spark is best for:

- Real time fraud detection and streaming analytics

- Machine learning pipelines with Spark MLlib

- Iterative computations like graph processing

Hybrid approach (Lambda Architecture):

- Hadoop stores historical data

- Spark processes both streaming and batch data for unified analytics

Example:

A financial services company might store 7 years of transactional data in Hadoop for compliance while using Spark for instant fraud detection and customer insights.
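The hybrid approach in the example above can be sketched as a batch view over stored history, a speed view over recent events, and a serving step that merges the two. This is a plain Python illustration under stated assumptions: the account names, amounts, and the functions `total_by_account` and `serve` are hypothetical, and in practice the batch view would come from a job over data in Hadoop while the speed view would come from a Spark streaming job.

```python
from collections import Counter


def total_by_account(transactions):
    """Aggregate (account, amount) pairs into a total per account."""
    totals = Counter()
    for account, amount in transactions:
        totals[account] += amount
    return totals


def serve(account, batch_view, speed_view):
    """Serving layer: answer a query by merging the batch and speed views."""
    return batch_view.get(account, 0) + speed_view.get(account, 0)


# Batch layer: years of history, recomputed periodically (hypothetical data).
historical = [("acct-1", 100), ("acct-2", 50), ("acct-1", 25)]
batch_view = total_by_account(historical)

# Speed layer: transactions that arrived since the last batch run (hypothetical data).
recent = [("acct-1", 10), ("acct-3", 7)]
speed_view = total_by_account(recent)

for account in ("acct-1", "acct-2", "acct-3"):
    print(account, serve(account, batch_view, speed_view))
```

The batch view stays complete but slightly stale, the speed view stays fresh but small, and merging them at query time gives an answer that covers both historical and real time data.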

Big Data Trends for 2025: AI, Hybrid and Modern Toolchains

The big data landscape continues to shift toward real time intelligence and hybrid cloud deployments.

Key trends shaping Hadoop and Spark usage include:

1) AI and Machine Learning Integration

- Spark powers ML pipelines, predictive analytics, and graph algorithms

- AI driven fraud detection, recommendation engines, and IoT analytics are becoming standard

2) Hybrid and Edge Deployments

- Hadoop HDFS is still relevant for local, compliant storage

- Spark and Flink extend analytics to edge devices and IoT environments

3) Modern Data Toolchains

Tools like Delta Lake, Apache Iceberg, and Apache Kylin bring:

- ACID compliance for big data lakes

- BI and SQL query capabilities on Hadoop + Spark ecosystems

- Improved integration with cloud and lakehouse models

Forward Looking Strategy:

Enterprises, by leveraging Hadoop for durability and Spark for speed and intelligence, are not just preparing for but actively shaping the future of AI driven, real time decision making in 2025.

Implementation Roadmap: A Crucial Guide to Choosing Between Hadoop, Spark, or Both

When building your big data architecture, follow this roadmap:

1) Assess Your Data Needs: Determine batch vs. real time requirements

2) Start with Storage: Use HDFS for cost effective, scalable storage

3) Layer Spark for Intelligence: Enable real time processing and ML pipelines

4) Consider Hybrid Cloud and Edge: Extend analytics to multi cloud and IoT

5) Optimize Continuously: Monitor performance, cost, and future scalability

Final Thoughts on Hadoop Vs Spark

Hadoop is excellent for processing multiple sets of massive amounts of data in parallel. The Apache Hadoop architecture can keep growing its storage by adding nodes to the cluster. It works with analytical tools such as HBase, MongoDB, Apache Mahout, Pentaho, R, and Python.

Spark is suitable for analyzing real time data from multiple sources, such as sensors, the Internet of Things (IoT), and financial systems. Its analytics can also be used to target particular groups in machine learning driven media campaigns. Spark has been reported to run queries up to 100 times quicker than Hadoop Hive without modifying code.

Apache Hadoop and Apache Spark both have prominent analytics and extensive data processing features. With over 2,000 contributors and thousands of organizations using it, including 80% of the Fortune 500, Apache Spark has a thriving and active community.

At the same time, Hadoop technology is implemented in multiple industries such as healthcare, education, government, banking, communication, and entertainment. As a result, there is clearly enough room for both to grow, with numerous use cases for each of these open source technologies.

However, adopting both Hadoop and Spark technologies is laborious, so companies seek Veritis’s services. Veritis, the Stevie and Globee Business Awards winner, is a DevOps consulting company and IT consulting services provider that has been partnering with small to large companies, including Fortune 500 firms, for over a decade. We offer customers world class experiences and cost effective solutions.